In this series, we are going to show you how to build a hopper robot in ROS and make it learn to hop using reinforcement learning algorithm. The hopper robot simulation has been built in the last post. In case you didn’t follow it, you can find the post here.

Part 1

Use OpenAI to make a Hopper robot learn in Gazebo simulator, using ROS Development Studio. We will use Qlearning and Gym for that.

Step 1. Create a training package

Let’s create a package for training

cd ~/simulation_ws/src/loco_motion catkin_create_pkg my_hopper_training rospy

Then we create a launch file called main.launch inside the my_hopper_training/launch directory with the following content

<!--

Date of creation: 5/II/2018

Application created by: Miguel Angel Rodriguez <duckfrost@theconstructsim.com>

The Construct https://www.theconstruct.ai

License LGPLV3 << Basically means you can do whatever you want with this!

-->

<launch>

<!-- Load the parameters for the algorithm -->

<rosparam command="load" file="$(find my_hopper_training)/config/qlearn_params.yaml" />

<!-- Launch the training system -->

<node pkg="my_hopper_training" name="monoped_gym" type="start_training_v2.py" output="screen"/>

</launch>

To implement reinforcement learning, we’ll use an algorithm called q-learn. We’ll save the parameters for the q-learn algorithm as qlearn_params.yaml under the my_hopper_training/config directory with the following content

# Algortihm Parameters

alpha: 0.1

gamma: 0.8

epsilon: 0.9

epsilon_discount: 0.999 # 1098 eps to reach 0.1

nepisodes: 100000

nsteps: 1000

# Environment Parameters

desired_pose:

x: 0.0

y: 0.0

z: 1.0

desired_force: 7.08 # In Newtons, normal contact force when stanting still with 9.81 gravity

desired_yaw: 0.0 # Desired yaw in radians for the hopper to stay

max_height: 3.0 # in meters

min_height: 0.5 # in meters

max_incl: 1.57 # in rads

running_step: 0.001 # in seconds

joint_increment_value: 0.05 # in radians

done_reward: -1000.0 # reward

alive_reward: 100.0 # reward

weight_r1: 1.0 # Weight for joint positions ( joints in the zero is perfect )

weight_r2: 0.0 # Weight for joint efforts ( no efforts is perfect )

weight_r3: 1.0 # Weight for contact force similar to desired ( weight of monoped )

weight_r4: 1.0 # Weight for orientation ( vertical is perfect )

weight_r5: 1.0 # Weight for distance from desired point ( on the point is perfect )

In this post, we’ll focus on explaining the training script. Let’s create it under the my_hopper_training_src directory and call it start_training_v2.py with the following content

#!/usr/bin/env python

'''

Original Training code made by Ricardo Tellez <rtellez@theconstructsim.com>

Moded by Miguel Angel Rodriguez <duckfrost@theconstructsim.com>

Visit our website at ec2-54-246-60-98.eu-west-1.compute.amazonaws.com

'''

import gym

import time

import numpy

import random

import qlearn

from gym import wrappers

from std_msgs.msg import Float64

# ROS packages required

import rospy

import rospkg

# import our training environment

import monoped_env

if __name__ == '__main__':

rospy.init_node('monoped_gym', anonymous=True, log_level=rospy.INFO)

# Create the Gym environment

env = gym.make('Monoped-v0')

rospy.logdebug ( "Gym environment done")

reward_pub = rospy.Publisher('/monoped/reward', Float64, queue_size=1)

episode_reward_pub = rospy.Publisher('/monoped/episode_reward', Float64, queue_size=1)

# Set the logging system

rospack = rospkg.RosPack()

pkg_path = rospack.get_path('my_hopper_training')

outdir = pkg_path + '/training_results'

env = wrappers.Monitor(env, outdir, force=True)

rospy.logdebug("Monitor Wrapper started")

last_time_steps = numpy.ndarray(0)

# Loads parameters from the ROS param server

# Parameters are stored in a yaml file inside the config directory

# They are loaded at runtime by the launch file

Alpha = rospy.get_param("/alpha")

Epsilon = rospy.get_param("/epsilon")

Gamma = rospy.get_param("/gamma")

epsilon_discount = rospy.get_param("/epsilon_discount")

nepisodes = rospy.get_param("/nepisodes")

nsteps = rospy.get_param("/nsteps")

# Initialises the algorithm that we are going to use for learning

qlearn = qlearn.QLearn(actions=range(env.action_space.n),

alpha=Alpha, gamma=Gamma, epsilon=Epsilon)

initial_epsilon = qlearn.epsilon

start_time = time.time()

highest_reward = 0

# Starts the main training loop: the one about the episodes to do

for x in range(nepisodes):

rospy.loginfo ("STARTING Episode #"+str(x))

cumulated_reward = 0

cumulated_reward_msg = Float64()

episode_reward_msg = Float64()

done = False

if qlearn.epsilon > 0.05:

qlearn.epsilon *= epsilon_discount

# Initialize the environment and get first state of the robot

rospy.logdebug("env.reset...")

# Now We return directly the stringuified observations called state

state = env.reset()

rospy.logdebug("env.get_state...==>"+str(state))

# for each episode, we test the robot for nsteps

for i in range(nsteps):

# Pick an action based on the current state

action = qlearn.chooseAction(state)

# Execute the action in the environment and get feedback

rospy.logdebug("###################### Start Step...["+str(i)+"]")

rospy.logdebug("haa+,haa-,hfe+,hfe-,kfe+,kfe- >> [0,1,2,3,4,5]")

rospy.logdebug("Action to Perform >> "+str(action))

nextState, reward, done, info = env.step(action)

rospy.logdebug("END Step...")

rospy.logdebug("Reward ==> " + str(reward))

cumulated_reward += reward

if highest_reward < cumulated_reward:

highest_reward = cumulated_reward

rospy.logdebug("env.get_state...[distance_from_desired_point,base_roll,base_pitch,base_yaw,contact_force,joint_states_haa,joint_states_hfe,joint_states_kfe]==>" + str(nextState))

# Make the algorithm learn based on the results

qlearn.learn(state, action, reward, nextState)

# We publish the cumulated reward

cumulated_reward_msg.data = cumulated_reward

reward_pub.publish(cumulated_reward_msg)

if not(done):

state = nextState

else:

rospy.logdebug ("DONE")

last_time_steps = numpy.append(last_time_steps, [int(i + 1)])

break

rospy.logdebug("###################### END Step...["+str(i)+"]")

m, s = divmod(int(time.time() - start_time), 60)

h, m = divmod(m, 60)

episode_reward_msg.data = cumulated_reward

episode_reward_pub.publish(episode_reward_msg)

rospy.loginfo( ("EP: "+str(x+1)+" - [alpha: "+str(round(qlearn.alpha,2))+" - gamma: "+str(round(qlearn.gamma,2))+" - epsilon: "+str(round(qlearn.epsilon,2))+"] - Reward: "+str(cumulated_reward)+" Time: %d:%02d:%02d" % (h, m, s)))

rospy.loginfo ( ("\n|"+str(nepisodes)+"|"+str(qlearn.alpha)+"|"+str(qlearn.gamma)+"|"+str(initial_epsilon)+"*"+str(epsilon_discount)+"|"+str(highest_reward)+"| PICTURE |"))

l = last_time_steps.tolist()

l.sort()

rospy.loginfo("Overall score: {:0.2f}".format(last_time_steps.mean()))

rospy.loginfo("Best 100 score: {:0.2f}".format(reduce(lambda x, y: x + y, l[-100:]) / len(l[-100:])))

env.close()

We won’t go into detail to explain the q-learn algorithm. You can find a tutorial here if you are interested. You can simply copy and paste the following code into a file called qlearn.py and put it under the my_hopper_training/src directory

''' Q-learning approach for different RL problems as part of the basic series on reinforcement learning @ Inspired by https://gym.openai.com/evaluations/eval_kWknKOkPQ7izrixdhriurA @author: Victor Mayoral Vilches <victor@erlerobotics.com> ''' import random class QLearn: def __init__(self, actions, epsilon, alpha, gamma): self.q = {} self.epsilon = epsilon # exploration constant self.alpha = alpha # discount constant self.gamma = gamma # discount factor self.actions = actions def getQ(self, state, action): return self.q.get((state, action), 0.0) def learnQ(self, state, action, reward, value): ''' Q-learning: Q(s, a) += alpha * (reward(s,a) + max(Q(s') - Q(s,a)) ''' oldv = self.q.get((state, action), None) if oldv is None: self.q[(state, action)] = reward else: self.q[(state, action)] = oldv + self.alpha * (value - oldv) def chooseAction(self, state, return_q=False): q = [self.getQ(state, a) for a in self.actions] maxQ = max(q) if random.random() < self.epsilon: minQ = min(q); mag = max(abs(minQ), abs(maxQ)) # add random values to all the actions, recalculate maxQ q = [q[i] + random.random() * mag - .5 * mag for i in range(len(self.actions))] maxQ = max(q) count = q.count(maxQ) # In case there're several state-action max values # we select a random one among them if count > 1: best = [i for i in range(len(self.actions)) if q[i] == maxQ] i = random.choice(best) else: i = q.index(maxQ) action = self.actions[i] if return_q: # if they want it, give it! return action, q return action def learn(self, state1, action1, reward, state2): maxqnew = max([self.getQ(state2, a) for a in self.actions]) self.learnQ(state1, action1, reward, reward + self.gamma*maxqnew)

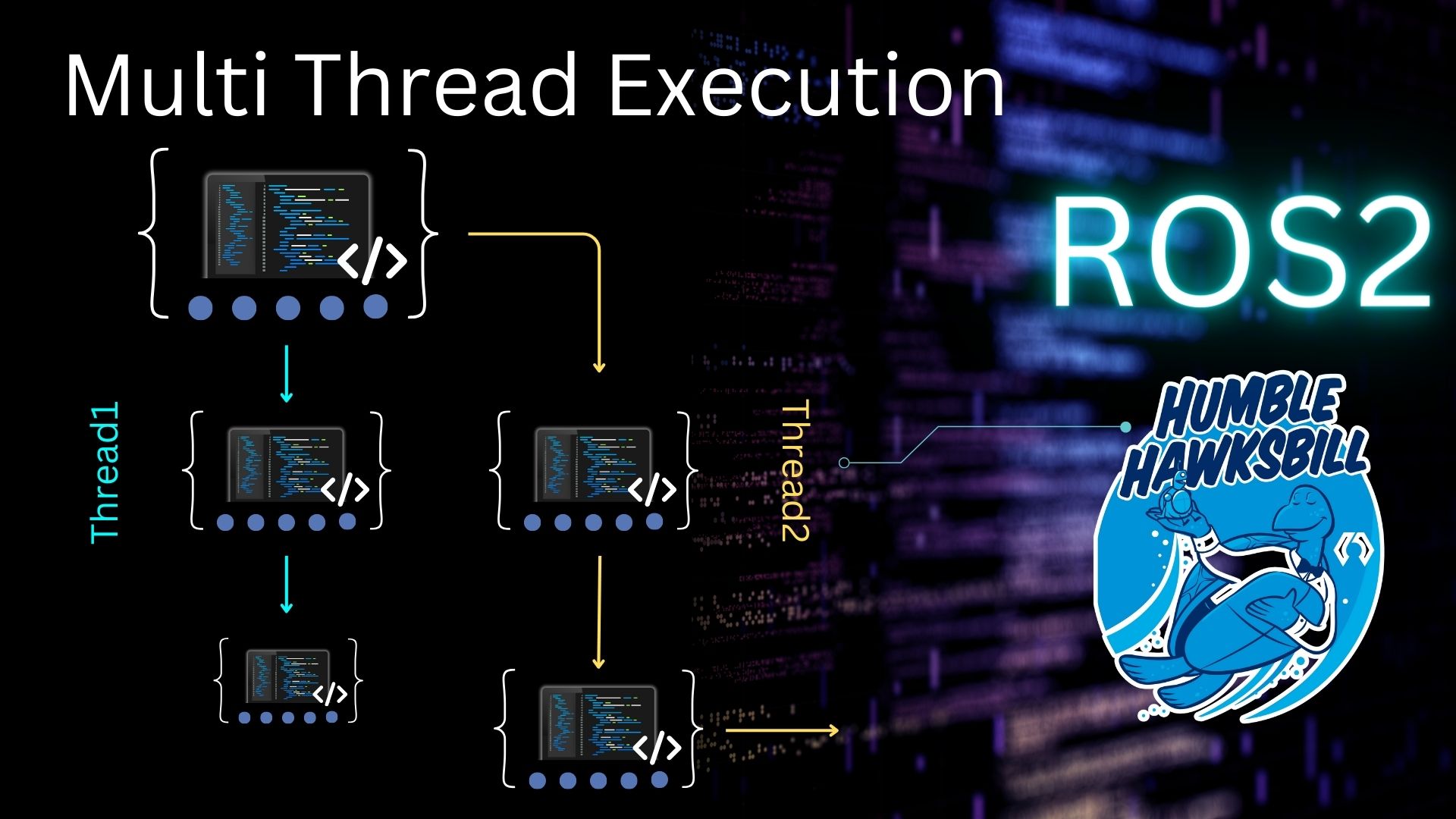

In the training script, we are basically doing the following step:

- create the training environment

- read q learn parameters from the parameter server

- try to get the highest reward with the q learn algorithm by deciding which action to take based on the current state for each timestep

That’s it for today. For the next post, we are going to explain how to build the gym training environment.

Edit by: Tony Huang

Here you will find all the code:

https://bitbucket.org/theconstructcore/hopper/src/master/

Or use directly the project of ROSDevelopementStudio:

https://rds.theconstructsim.com/tc_projects/use_project_share_link/f162963c-5651-460a-bab0-b1cd45607103

Check Out this OpenAI course in RobotIgnite Academy for learning the basics step by step:

https://wp.me/P9Rthq-1UZ

Hi, I’m new in all this of RL. I was trying to run the monoped training, with almost the same code and scripts. The problem is that it fails when launching the ‘main.launch’ file from the my_hopper_traning pkg. This is the error message that appears in the terminal:

process[monoped_gym-1]: started with pid [11594]

Traceback (most recent call last):

File “/home/estradav/Documents/Coding/ROS/The_Construct/construct_ws/src/monoped_ai/src/start_training_v2.py”, line 25, in

env = gym.make(‘Monoped-v0’)

File “/home/estradav/.local/lib/python3.8/site-packages/gym/envs/registration.py”, line 669, in make

env = PassiveEnvChecker(env)

File “/home/estradav/.local/lib/python3.8/site-packages/gym/wrappers/env_checker.py”, line 24, in __init__

assert hasattr(

AssertionError: The environment must specify an observation space. https://www.gymlibrary.dev/content/environment_creation/

[monoped_gym-1] process has died [pid 11594, exit code 1, cmd /home/estradav/Documents/Coding/ROS/The_Construct/construct_ws/src/monoped_ai/src/start_training_v2.py __name:=monoped_gym __log:=/home/estradav/.ros/log/2f405284-6f6a-11ed-a671-ad0e76e7c992/monoped_gym-1.log].

log file: /home/estradav/.ros/log/2f405284-6f6a-11ed-a671-ad0e76e7c992/monoped_gym-1*.log

all processes on machine have died, roslaunch will exit

shutting down processing monitor…

… shutting down processing monitor complete

done

I would like to know if anyone has had the same problem and what I can do to solve the error. Thanks!