Course

Reinforcement Learning for Robotics

Learn the theory behind the most widely used path planning algorithms.

Course Overview

This course introduces the general concepts of reinforcement learning and lets you put theory into practice through coding exercises and simulations in ROS.

In this course, you will learn reinforcement learning concepts applicable to robotics and the fundamental principles of Markov Decision Processes, the Bellman equation, Dynamic Programming, Monte Carlo methods, SARSA, and Q-learning. You will gain enough understanding of these core artificial intelligence techniques to see how robots make decisions, and you will advance your skills in Python programming and math.

Simulation Robots Used

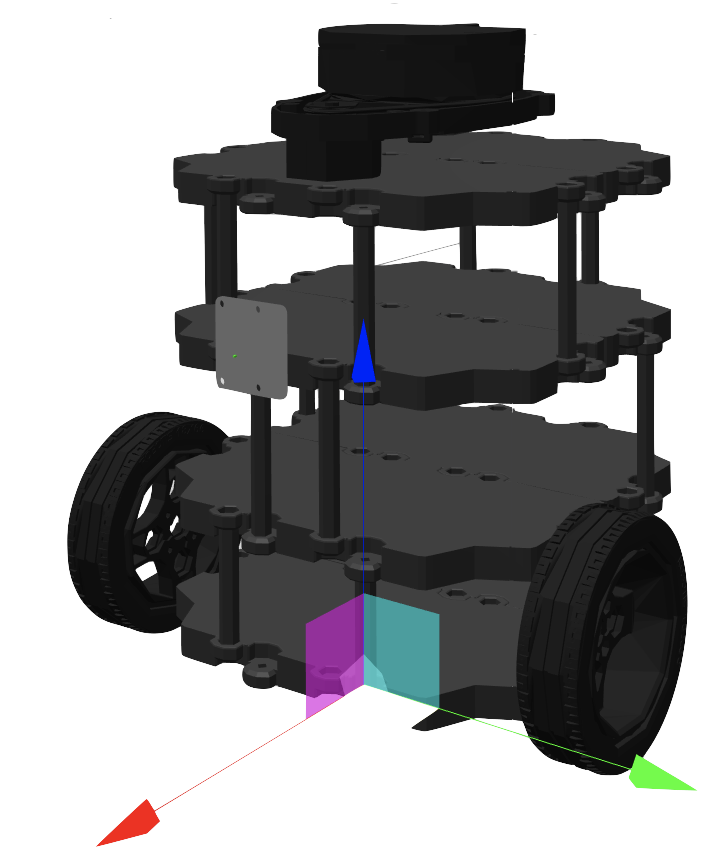

TurtleBot 2, Parrot Drone

Intermediate

20 hours

Prerequisites

COURSE CREATOR

Markus Buchholz

Ph.D. in Robotics, M.Sc. in Electronics and Computer Science, and M.Sc. in Economics. His main passion is programming autonomous systems (C++, Python) using AI, Deep Learning, and Reinforcement Learning.

What exercises will you be doing

Conditional Statements and Loops

Train a drone to reach a given destination.

Dynamic Programming

Find the optimal path for a TurtleBot robot applying Dynamic Programming techniques.

Monte Carlo methods

Compute the optimal path for a drone using Monte Carlo methods.

Temporal-Difference methods

Test the SARSA and Q-learning algorithms to train a drone to find the optimal path.

Course Project

Deploy the Q-learning algorithm to solve a maze environment with 3 obstacles for a flying drone.

Course Summary

Unit 1: Introduction to the Reinforcement Learning for Robotics Course

A brief introduction to the concepts you will be covering during the course.

Unit 2: The reinforcement learning problem

Learn some reinforcement learning basic concepts and terminology.

Unit 3: Dynamic Programming problem

Learn about dynamic programming (DP), which in this course is tailored to solving reinforcement learning problems expressed as Bellman equations.
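To give a feel for how a Bellman equation becomes a dynamic programming routine, here is a minimal sketch of value iteration. It is not taken from the course material: the 1-D corridor environment, the reward of -1 per move, and the function name `value_iteration` are all assumptions made for illustration.

```python
def value_iteration(n_states=5, gamma=0.9, tol=1e-8):
    """Value iteration on a hypothetical 1-D corridor (illustrative only).

    States are 0..n_states-1 and the last state is a terminal goal.
    The agent can move left or right; every move yields a reward of -1.
    """
    goal = n_states - 1
    values = [0.0] * n_states
    while True:
        new_values = list(values)
        for s in range(goal):  # the terminal goal keeps value 0
            backups = []
            for move in (-1, 1):
                # Moves that would leave the corridor keep the agent in place.
                s_next = min(max(s + move, 0), goal)
                backups.append(-1.0 + gamma * values[s_next])
            # Bellman optimality backup: take the best action value.
            new_values[s] = max(backups)
        delta = max(abs(a - b) for a, b in zip(new_values, values))
        values = new_values
        if delta < tol:
            return values
```

With `gamma=1.0`, each state's value is simply minus its distance to the goal, which makes the result easy to check by hand.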

Unit 4: Monte Carlo methods

In this unit, you are going to continue the discussion about optimal policies, which the agent evaluates, improves, and follows through Monte Carlo methods.
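As a rough illustration of the evaluation step only, the sketch below estimates state values with first-visit Monte Carlo prediction. The random-walk environment, the reward scheme, and the name `first_visit_mc` are assumptions for this example and are not the course's drone exercise; policy improvement is left out to keep it short.

```python
import random


def first_visit_mc(n_episodes=5000, n_states=5, seed=0):
    """First-visit Monte Carlo prediction under a random-walk policy.

    Hypothetical setup: non-terminal states are 1..n_states, while 0 and
    n_states + 1 are terminal. Ending on the right yields reward 1,
    ending on the left yields reward 0. No discounting is applied.
    """
    rng = random.Random(seed)
    right_terminal = n_states + 1
    returns_sum = {s: 0.0 for s in range(1, n_states + 1)}
    returns_count = {s: 0 for s in range(1, n_states + 1)}

    for _ in range(n_episodes):
        state = (n_states + 1) // 2  # start in the middle of the walk
        visited = []
        while 0 < state < right_terminal:
            visited.append(state)
            state += rng.choice((-1, 1))  # random policy: left or right
        episode_return = 1.0 if state == right_terminal else 0.0
        # The only reward arrives at termination, so every visited state
        # shares the same return; set() counts each state once per episode.
        for s in set(visited):
            returns_sum[s] += episode_return
            returns_count[s] += 1

    return {s: returns_sum[s] / returns_count[s]
            for s in returns_sum if returns_count[s] > 0}
```

For this particular walk the true value of state `s` is `s / 6`, so the estimates can be compared against a known answer.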

Unit 5: Temporal-Difference methods

In this unit, you will continue looking for the optimal way to solve an MDP, now for environments whose dynamics (transitions) are unknown in advance (model-free reinforcement learning).
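The two temporal-difference algorithms named in this course, SARSA and Q-learning, differ mainly in the target they bootstrap from. The sketch below shows the two textbook update rules side by side. The function names, the list-of-lists Q-table, and the default `alpha` and `gamma` values are assumptions for illustration.

```python
def sarsa_update(q, s, a, r, s_next, a_next, alpha=0.1, gamma=0.9):
    """On-policy update: bootstrap from the action actually taken next."""
    target = r + gamma * q[s_next][a_next]
    q[s][a] += alpha * (target - q[s][a])


def q_learning_update(q, s, a, r, s_next, alpha=0.1, gamma=0.9):
    """Off-policy update: bootstrap from the best action in the next state."""
    target = r + gamma * max(q[s_next])
    q[s][a] += alpha * (target - q[s][a])
```

Neither function needs a model of the transitions: both use only the observed reward `r` and next state `s_next`, which is what makes them model-free.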

Course Project

In this final project, your task is to deploy a Q-learning algorithm to solve a maze environment with 3 obstacles.
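For orientation, here is a minimal tabular Q-learning sketch on a small grid maze with three obstacles. It is not the project solution: the 4x4 layout, the rewards (-1 per move, -5 for bumping into a wall or obstacle), the hyperparameters, and all function names are assumptions, and the drone and ROS simulation are replaced by a plain Python grid.

```python
import random

# Hypothetical 4x4 maze: S = start, G = goal, # = obstacle (three in total).
MAZE = [
    "S...",
    ".#.#",
    ".#..",
    "...G",
]
ACTIONS = [(-1, 0), (1, 0), (0, -1), (0, 1)]  # up, down, left, right


def find(symbol):
    """Return the (row, col) of the first cell containing symbol."""
    for r, row in enumerate(MAZE):
        for c, cell in enumerate(row):
            if cell == symbol:
                return (r, c)


def step(state, action):
    """Apply an action; bumping into a wall or obstacle keeps the agent in place."""
    r, c = state[0] + action[0], state[1] + action[1]
    if not (0 <= r < len(MAZE) and 0 <= c < len(MAZE[0])) or MAZE[r][c] == "#":
        return state, -5.0, False
    return (r, c), -1.0, MAZE[r][c] == "G"


def train(episodes=3000, alpha=0.5, gamma=0.95, epsilon=0.2, seed=0):
    """Learn a Q-table with epsilon-greedy tabular Q-learning."""
    rng = random.Random(seed)
    q = {(r, c): [0.0] * 4 for r in range(len(MAZE)) for c in range(len(MAZE[0]))}
    start = find("S")
    for _ in range(episodes):
        state = start
        for _ in range(200):  # cap the episode length
            if rng.random() < epsilon:
                a = rng.randrange(4)  # explore
            else:
                a = max(range(4), key=lambda i: q[state][i])  # exploit
            nxt, reward, done = step(state, ACTIONS[a])
            # Q-learning target: bootstrap from the best next action.
            target = reward if done else reward + gamma * max(q[nxt])
            q[state][a] += alpha * (target - q[state][a])
            state = nxt
            if done:
                break
    return q


def greedy_path(q, max_steps=50):
    """Follow the greedy policy from the start and return the visited cells."""
    state = find("S")
    path = [state]
    for _ in range(max_steps):
        a = max(range(4), key=lambda i: q[state][i])
        state, _, done = step(state, ACTIONS[a])
        path.append(state)
        if done:
            break
    return path
```

Calling `greedy_path(train())` returns the list of `(row, col)` cells the learned policy visits from start to goal. Because all rewards are negative and the table starts at zero, untried actions look attractive at first, which encourages exploration in this small maze.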

