In this post you will see how to easily launch a Gazebo simulation with a simulated human crowd and your favorite robot: Jetbot! It will serve as the starting point for the next videos on people recognition, tracking, navigation and much more.

To follow along with the post, you need the following rosject:

https://app.theconstructsim.com/#/l/3dd2dffb/

Launch the simulation

Once the desktop is running, open a webshell and launch the simulation with the command below:

roslaunch jetdog_examples pedestrians_1.launch

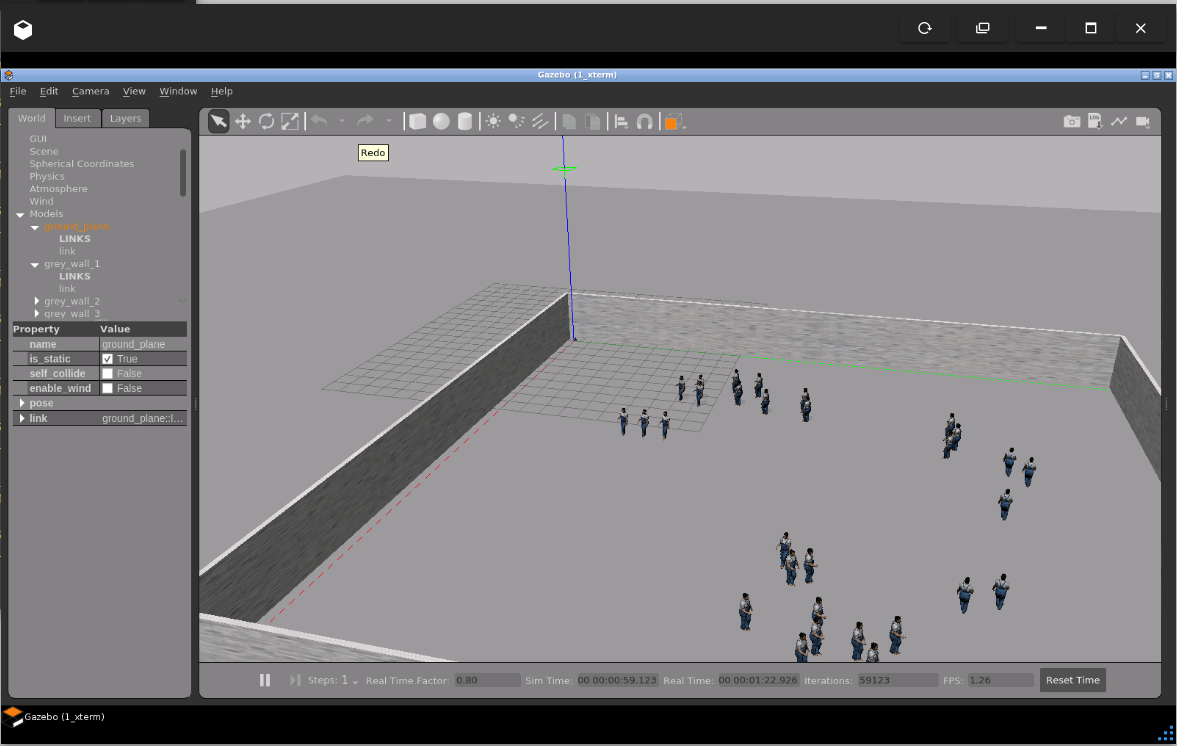

Open Gazebo and you should see the simulation running. Notice that the robot sits at the origin of the world, which is at one of the wall corners.

Check data available from the simulation in RViz

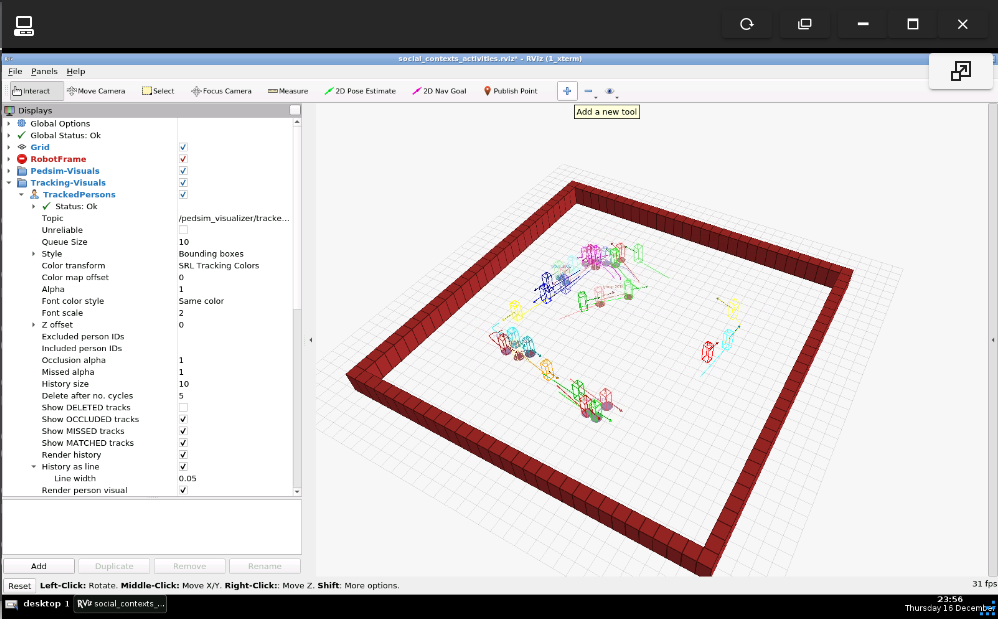

Open the graphical tools and maximize RViz. The people moving around should be visible in RViz because Gazebo itself publishes that information.
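If you also want to check from a script which topics the simulation exposes, a minimal sketch like the one below filters the output of `rostopic list` by namespace. The function names `topics_in_namespace` and `list_live_topics` are made up for this example, and the `/jetdog` namespace is simply taken from the camera topic used later in this post; the post does not say which topic carries the people data, so look through the full list for it.

```python
import subprocess


def topics_in_namespace(rostopic_output, namespace):
    """Return the topics from `rostopic list` output that live under `namespace`."""
    # Normalize so "/jetdog" and "/jetdog/" behave the same way.
    prefix = namespace.rstrip("/") + "/"
    # `rostopic list` prints one topic name per line.
    topics = [line.strip() for line in rostopic_output.splitlines() if line.strip()]
    return [topic for topic in topics if topic.startswith(prefix)]


def list_live_topics():
    """Run `rostopic list` against the running ROS master and return its raw text."""
    # Needs a sourced ROS environment and a running simulation.
    return subprocess.check_output(["rostopic", "list"]).decode("utf-8")
```

With the simulation running, `topics_in_namespace(list_live_topics(), "/jetdog")` should return the robot's topics, including the camera tilt command topic.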

Now center the camera by publishing to the topic that controls its tilt. Execute the command below:

rostopic pub /jetdog/camera_tilt_joint_position_controller/command \
std_msgs/Float64 "data: 0.0"

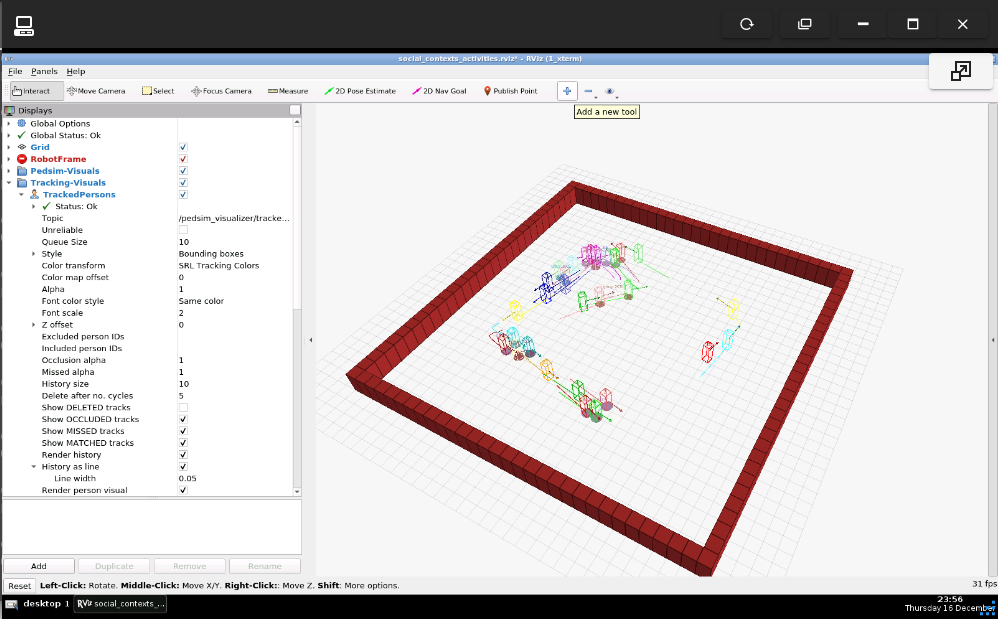

The camera should now point at the people moving around.
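If you prefer to script this step, the sketch below wraps the same `rostopic pub` call in Python. The helper names `tilt_command` and `set_tilt` are hypothetical, and the `-1` flag (publish once, then exit) is an addition that the command above does not use. The units and valid range of the tilt value depend on the robot's joint definition, which this post does not state, so treat any value other than 0.0 as an experiment.

```python
import shlex
import subprocess

# Topic name taken from the command shown in this post.
TILT_TOPIC = "/jetdog/camera_tilt_joint_position_controller/command"


def tilt_command(angle, topic=TILT_TOPIC):
    """Build the argument list for a one-shot `rostopic pub` of a Float64 value."""
    # -1 makes rostopic publish a single message and exit instead of blocking.
    payload = "data: {:.3f}".format(float(angle))
    return ["rostopic", "pub", "-1", topic, "std_msgs/Float64", payload]


def set_tilt(angle, dry_run=False):
    """Publish the tilt value, or only return the shell command if dry_run is True."""
    cmd = tilt_command(angle)
    printable = " ".join(shlex.quote(part) for part in cmd)
    if not dry_run:
        # Needs a sourced ROS environment and the simulation running.
        subprocess.check_call(cmd)
    return printable
```

Calling `set_tilt(0.0, dry_run=True)` returns the command as a string without touching ROS, which is handy for checking it before running `set_tilt(0.0)` in the webshell environment.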

That’s the basic setup that will be used in the next posts to detect people in the simulation using the robot.
